- by theguardian

- 21 Sep 2023

Google engineer says AI bot wants to 'serve humanity' but experts dismissive

- by theguardian

- 16 Jun 2022

- in technology

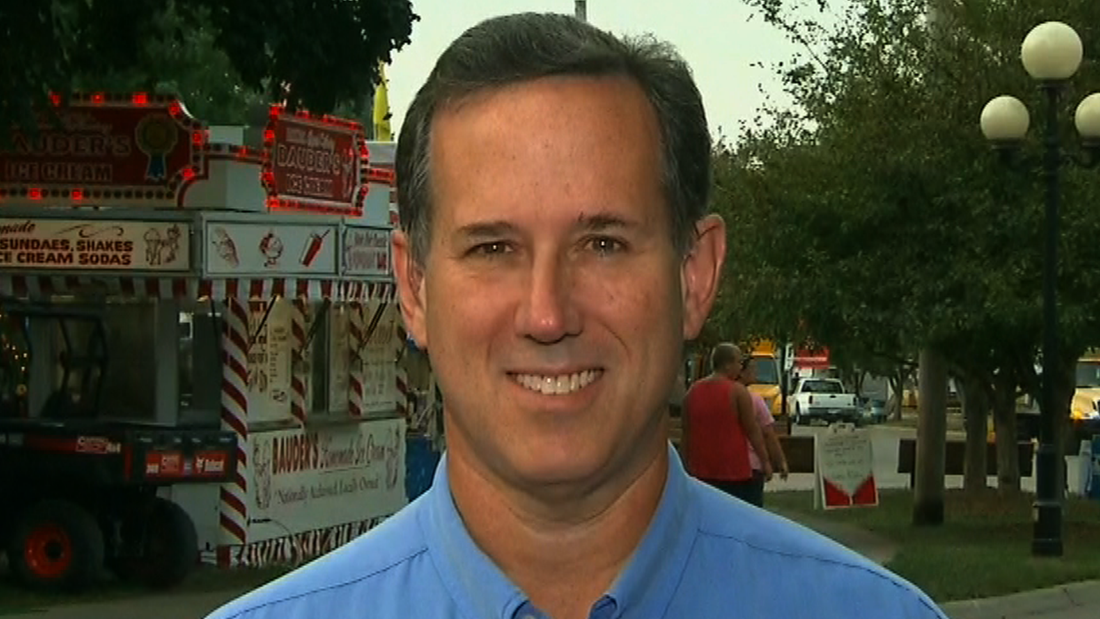

The suspended Google software engineer at the center of claims that the search engine's artificial intelligence language tool LaMDA is sentient has said the technology is "intensely worried that people are going to be afraid of it and wants nothing more than to learn how to best serve humanity".

The new claim by Blake Lemoine was made in an interview published on Monday, amid intense pushback from AI experts against the idea that artificial learning technology is anywhere close to being able to perceive or feel things.

The Canadian language development theorist Steven Pinker described Lemoine's claims as a "ball of confusion".

"One of Google's (former) ethics experts doesn't understand the difference between sentience (AKA subjectivity, experience), intelligence, and self-knowledge. (No evidence that its large language models have any of them.)," Pinker posted on Twitter.

The scientist and author Gary Marcus said Lemoine's claims were "Nonsense".

"Neither LaMDA nor any of its cousins (GPT-3) are remotely intelligent. All they do is match patterns, draw from massive statistical databases of human language. The patterns might be cool, but language these systems utter doesn't actually mean anything at all. And it sure as hell doesn't mean that these systems are sentient," he wrote in a Substack post.

Marcus added that advanced computer learning technology could not protect humans from being "taken in" by pseudo-mystical illusions.

- by travelpulse

- December 09, 2016

Resort Casinos Likely Scuttled Under Amended Bermuda Legislation

Premier announces changes to long-delayed project